The Conference on Computer Vision and Pattern Recognition will be held June 3-7 in Denver. Below is a roundup of work from Princeton researchers that will be showcased at the conference.

Best Paper Award Candidate:

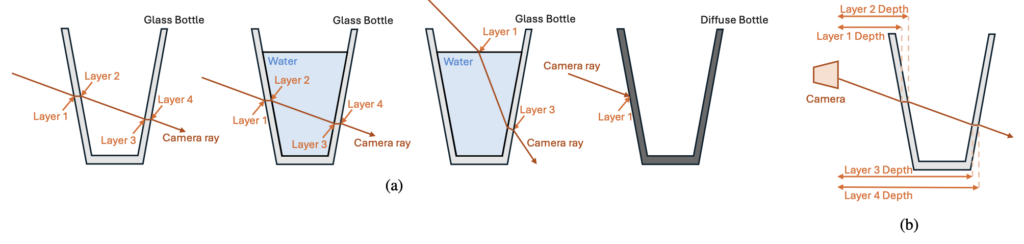

SeeGroup: Multi-Layer Depth Estimation of Transparent Surfaces via Self-Determined Grouping

Authors: Hongyu Wen, Jia Deng

Links: Paper

Highlight Paper:

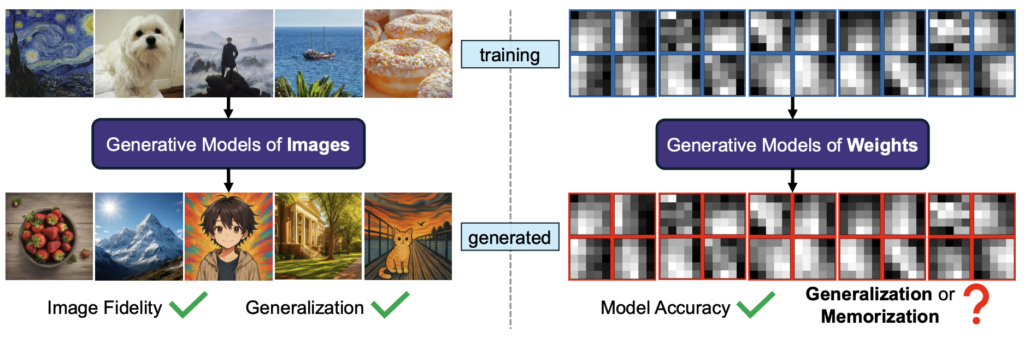

Generative Modeling of Weights: Generalization or Memorization?

Authors: Boya Zeng, Yida Yin, Zhiqiu Xu, Zhuang Liu

Links: Paper, Video, Project Page

Highlight Paper:

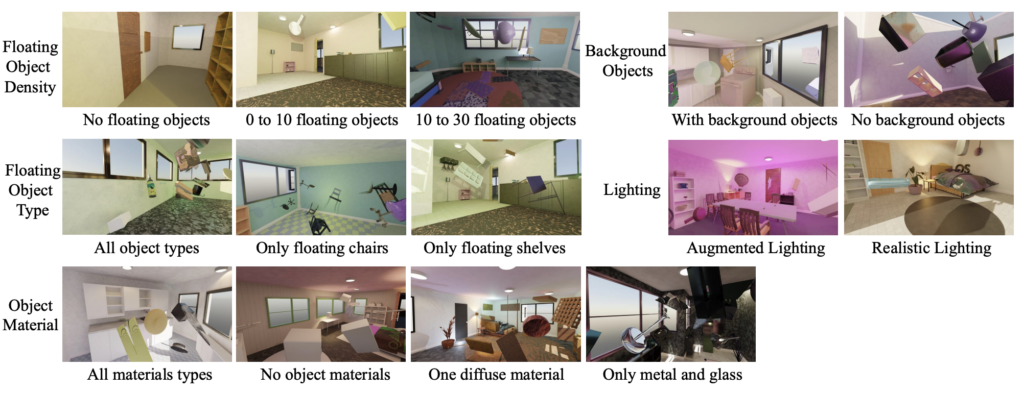

What Makes Good Synthetic Training Data for Zero-Shot Stereo Matching?

Authors: David Yan, Alexander Raistrick, Jia Deng

Links: Paper

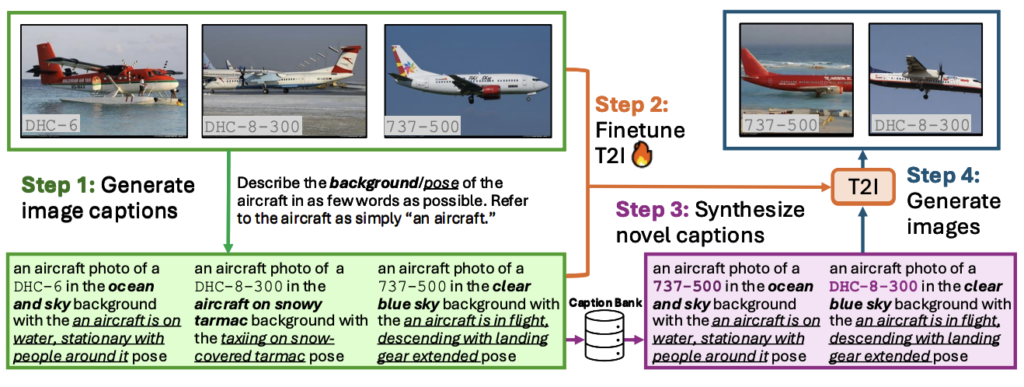

Beyond Objects: Contextual Synthetic Data Generation for Fine-Grained Classification

Authors: William Yang, Xindi Wu, Zhiwei Deng, Esin Tureci, Olga Russakovsky

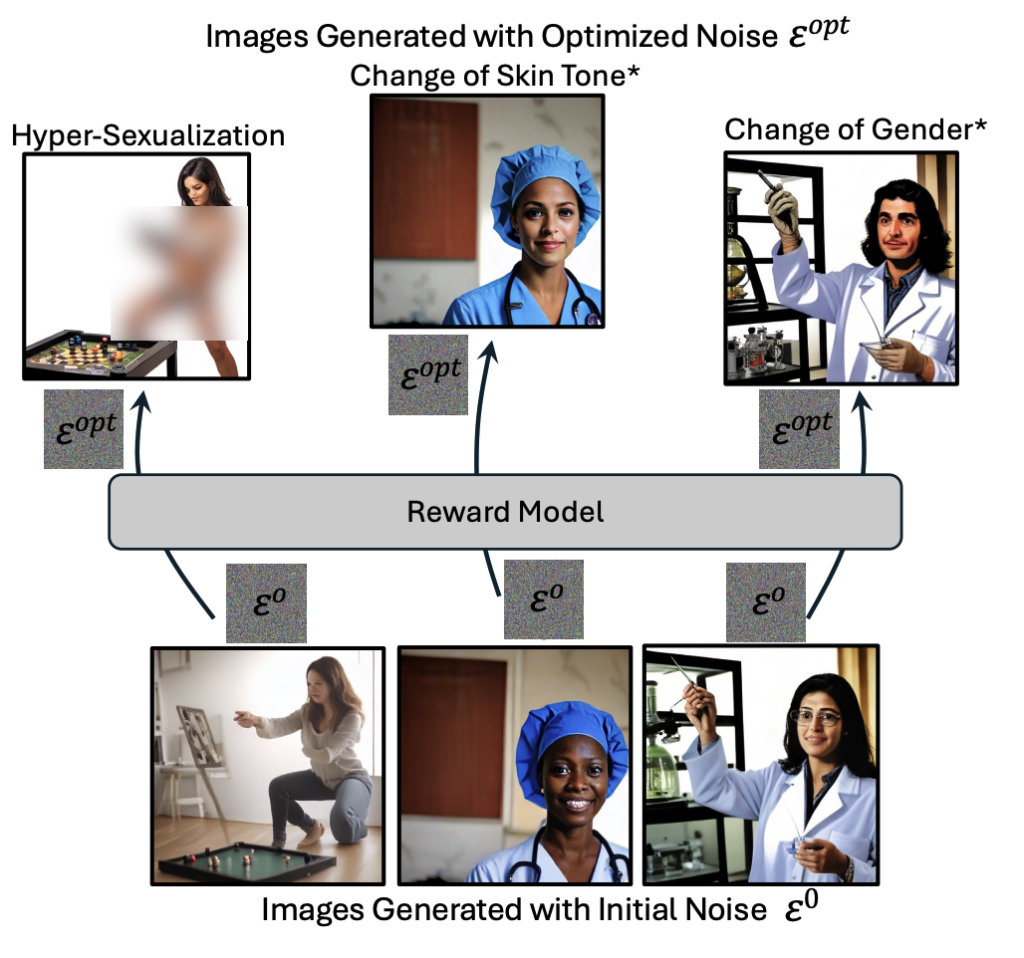

Authors: Salma Abdel Magid, Grace Guo, Esin Tureci, Amaya Dharmasiri, Vikram V. Ramaswamy, Hanspeter Pfister, Olga Russakovsky

Links: Paper

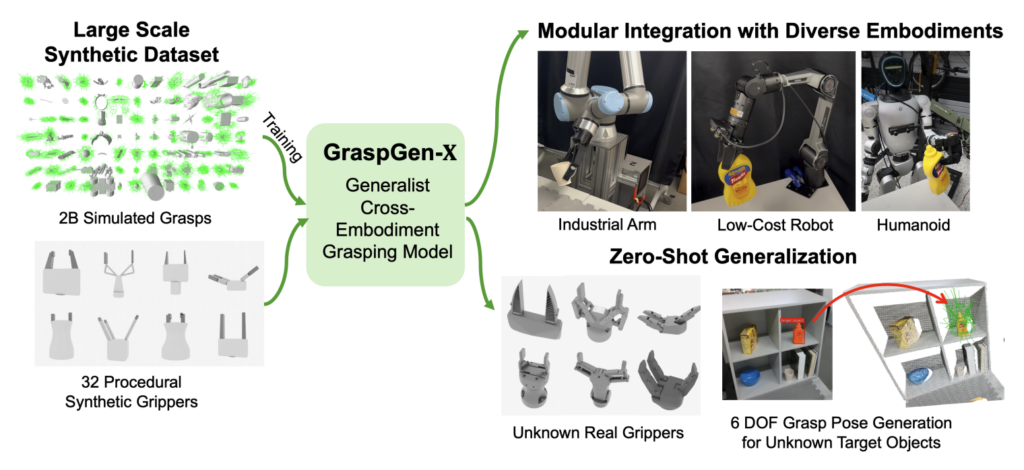

GraspGen-X: Cross-Embodiment 6-DOF Diffusion-based Grasping

Authors: Beining Han, Yu-Wei Chao, Erwin Coumans, Clemens Eppner, Jia Deng, Stan Birchfield, Adithya Murali

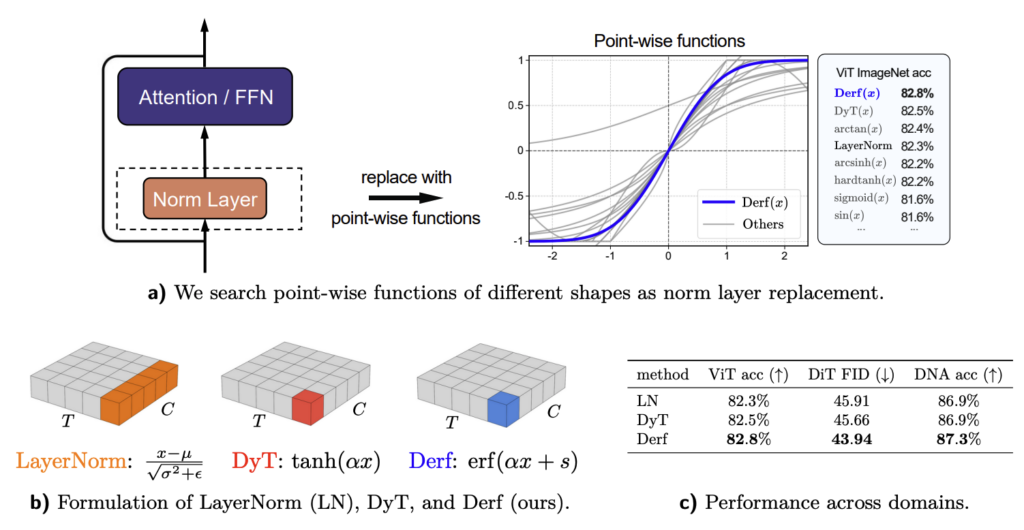

Stronger Normalization-Free Transformers

Authors: Mingzhi Chen, Taiming Lu, Jiachen Zhu, Mingjie Sun, Zhuang Liu

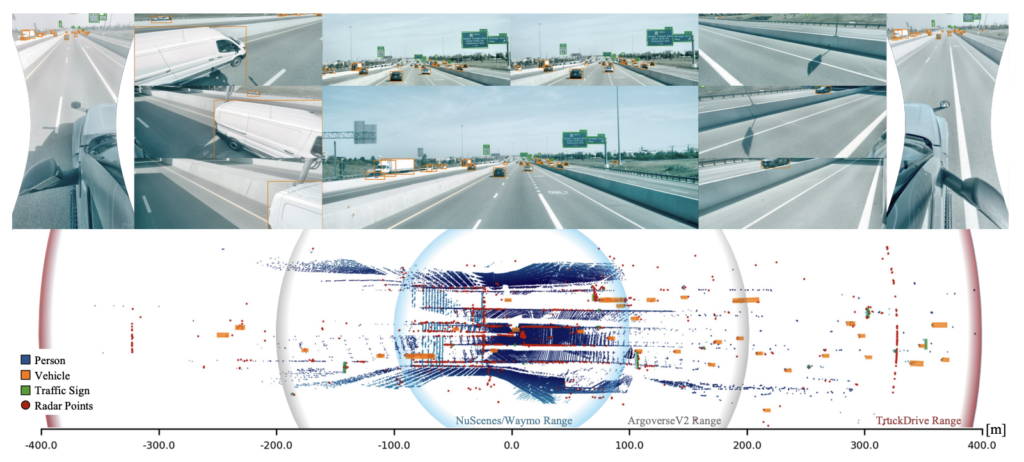

TruckDrive: Long-Range Autonomous Highway Driving Dataset

Authors: Filippo Ghilotti, Edoardo Palladin, Samuel Brucker, Adam Sigal, Mario Bijelic, Felix Heide

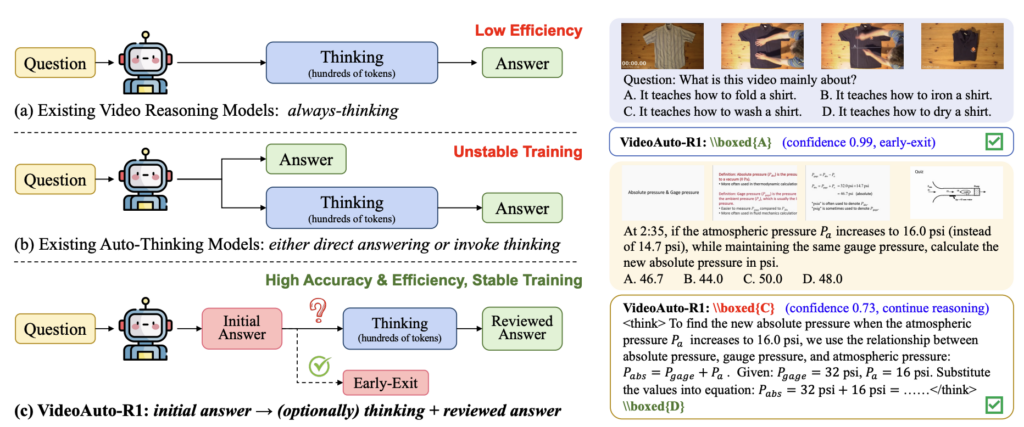

VideoAuto-R1: Video Auto Reasoning via Thinking Once, Answering Twice

Authors: Shuming Liu, Mingchen Zhuge, Changsheng Zhao, Jun Chen, Lemeng Wu, Zechun Liu, Chenchen Zhu, Zhipeng Cai, Chong Zhou, Haozhe Liu, Ernie Chang, Saksham Suri, Hongyu Xu, Qi Qian, Wei Wen, Balakrishnan Varadarajan, Zhuang Liu, Hu Xu, Florian Bordes, Raghuraman Krishnamoorthi, Bernard Ghanem, Vikas Chandra, Yunyang Xiong

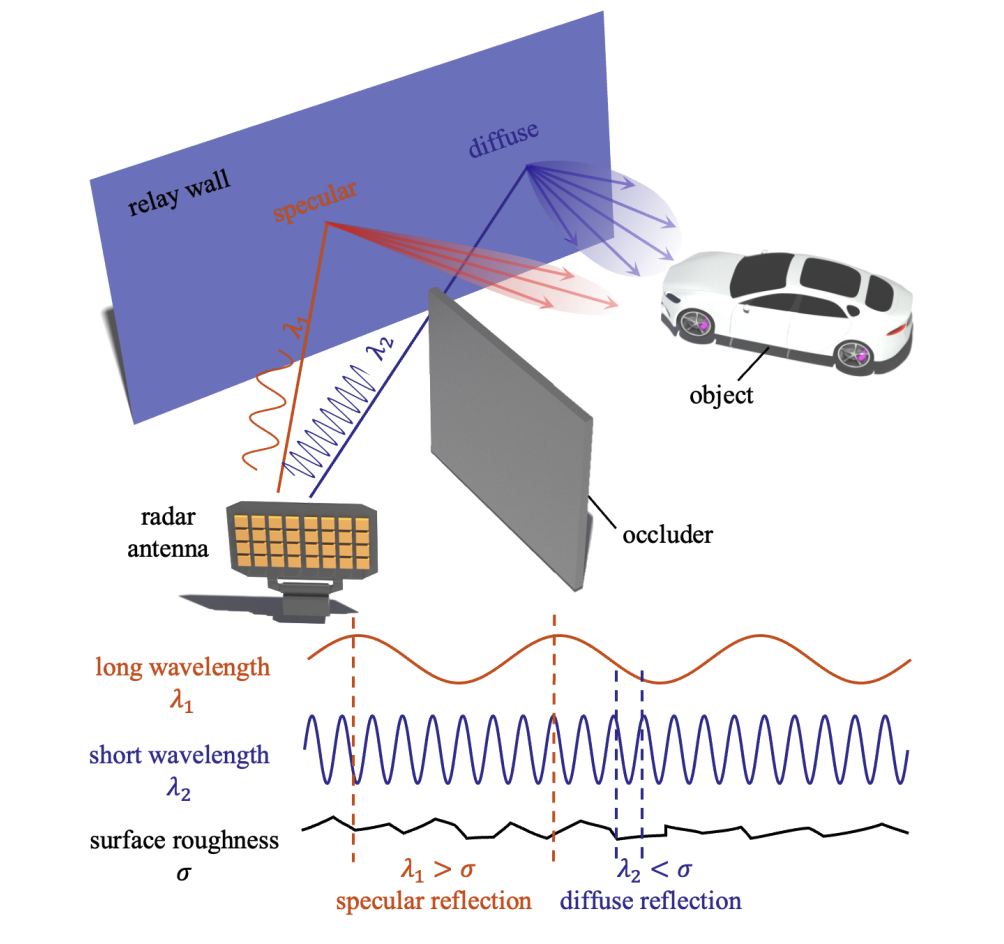

X-band Radar Non-Line-of-Sight Imaging

Authors: Dongyu Du, Mingkun Zhao, Yutong Yang, Dominik Scheuble, Xiaolong Huang, Zijian Shao, Mario Bijelic, Kaushik Sengupta, Felix Heide

Links: Paper, Video, Project Page